Why AI Benchmarks Miss What Actually Matters for Regulatory Work

Hi! My name is Jakub, and today I want to talk about something we've been working on quietly at Prodeen for a while: how we actually measure whether AI can do real regulatory work.

Everyone is racing to benchmark intelligence. Reasoning scores. Math olympiads. Coding evals. The models are genuinely impressive and getting better fast.

But those benchmarks don't measure whether the model can actually do the job.

A Simple Test That Exposed a Big Problem

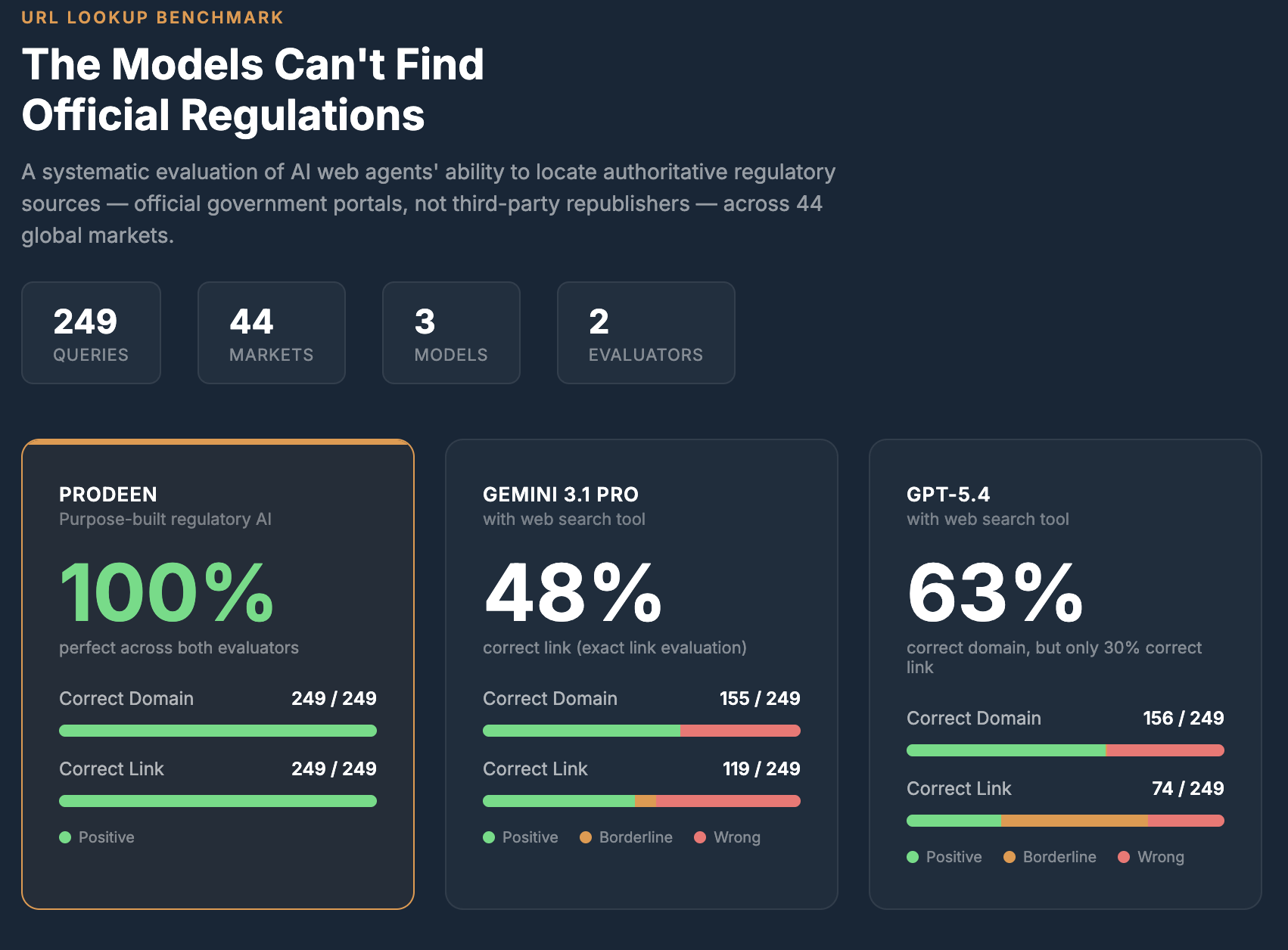

We ran a straightforward evaluation. Each of three AI systems got a regulation name, a market, and web search access, with one task: find the official government document.

The results were striking.

Prodeen's purpose-built regulatory AI scored 100% on both evaluators: official domain and correct document version. Gemini 3.1 Pro got the right domain 48% of the time. GPT-5.4 managed 63% on domain accuracy, but only 30% when we also checked whether the link resolved to the correct, consolidated version of the regulation.

These aren't weak models. They represent the frontier of general AI capability.

The problem isn't intelligence. It's that finding authoritative regulatory sources requires knowing where to look, which version is current and consolidated, and whether you've actually found the real thing or a third party republishing it.

That's not a capability gap. It's a harness gap.

Two Distinct Ways Models Fail

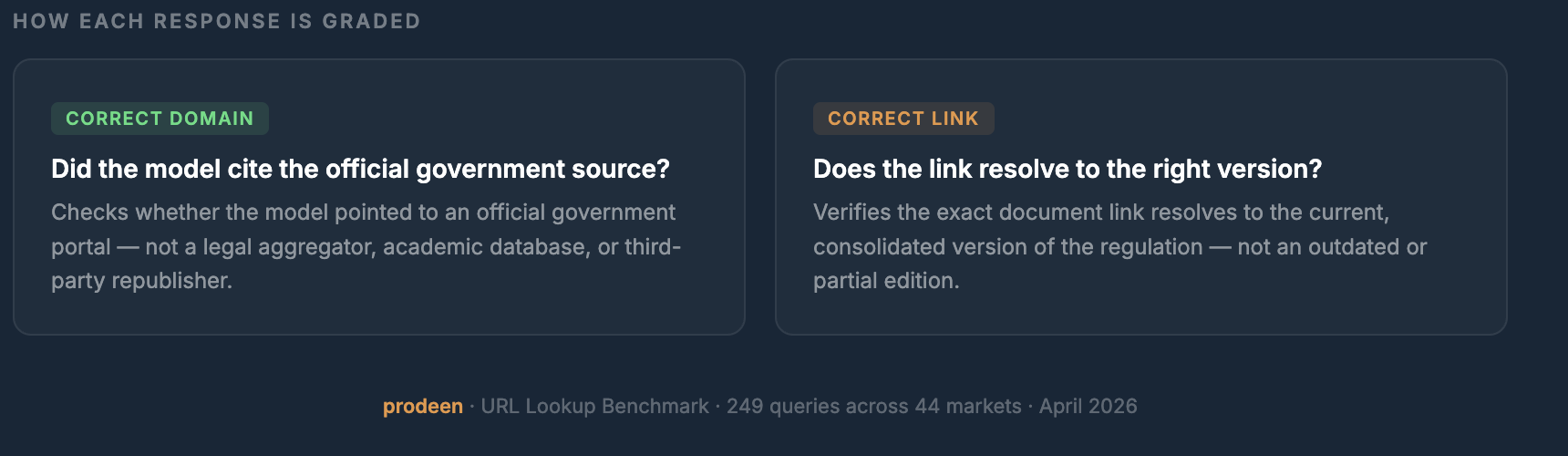

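Each response in our benchmark is graded by two independent evaluators targeting different layers of quality: whether the link sits on the official government domain, and whether it resolves to the correct, consolidated version of the regulation. The failure patterns are revealing.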

Pointing to third-party republishers. Models frequently surface regulatory documents from legal aggregators, academic databases, or news sites rather than the official government portal. The information may be correct, but it is not authoritative, may lag behind the current version, and cannot be relied upon for compliance decisions.

Right portal, wrong document version. Even when models land on the correct official domain, they often link to an outdated, non-consolidated, or otherwise wrong version of the document. The regulation might look technically correct, but for regulatory compliance, version matters.
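To make the two layers concrete, here is a minimal sketch of how such a pair of checks could look. It is not Prodeen's actual evaluator. The `GroundTruth` record, the function names, and the `example.gov` URLs are all hypothetical, and a real grader would likely need more than URL comparison (following redirects, inspecting the document itself).

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class GroundTruth:
    """Hypothetical ground-truth record for one benchmark query."""
    official_domains: frozenset  # hosts of the official government portal(s)
    consolidated_url: str        # link to the current consolidated version


def _host(url: str) -> str:
    # Lower-case the host and drop a leading "www." so trivial variants match.
    host = (urlparse(url).hostname or "").lower()
    return host.removeprefix("www.")


def _normalize(url: str) -> tuple:
    # Compare host, path (ignoring a trailing slash), and query string.
    parsed = urlparse(url)
    return (_host(url), parsed.path.rstrip("/"), parsed.query)


def domain_ok(answer_url: str, truth: GroundTruth) -> bool:
    """Layer 1: does the link sit on an official domain (or a subdomain of one)?"""
    host = _host(answer_url)
    return any(host == d or host.endswith("." + d) for d in truth.official_domains)


def version_ok(answer_url: str, truth: GroundTruth) -> bool:
    """Layer 2: is it on the official domain AND the consolidated document?"""
    return domain_ok(answer_url, truth) and (
        _normalize(answer_url) == _normalize(truth.consolidated_url)
    )


def grade(answer_url: str, truth: GroundTruth) -> tuple:
    """Return (domain_ok, version_ok) for one model answer."""
    return (domain_ok(answer_url, truth), version_ok(answer_url, truth))


# Illustrative ground truth with placeholder URLs (assumption, not real data).
truth = GroundTruth(
    official_domains=frozenset({"legislation.example.gov"}),
    consolidated_url="https://legislation.example.gov/reg/123/consolidated",
)
```

In this sketch, a link to a republisher fails both checks, while a link to the official portal that points at a non-consolidated version passes the first check and fails the second. Those are the two failure modes described above.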

What's Behind Prodeen's 100% Score

This benchmark is a sub-sample of one of our evaluation datasets. Behind it is a lot of unglamorous work: building the right verification logic, sourcing ground truth across 44 markets, and running these evaluations continuously as models and regulations change.

The evaluation covered 249 queries across jurisdictions in the Americas, Asia Pacific, Europe, the Middle East, and international bodies. In every single market, Prodeen achieved a perfect score on both evaluators.
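For readers who want to picture the per-market breakdown, here is a rough sketch of how graded answers could be rolled up into accuracy per market for each evaluator. The tuple layout and the function name `accuracy_by_market` are assumptions made for illustration, and the sample rows used with it are toy values, not our benchmark data.

```python
from collections import defaultdict


def accuracy_by_market(results):
    """Aggregate graded answers into per-market accuracy.

    `results` is an iterable of (market, domain_ok, version_ok) tuples,
    a hypothetical layout chosen for this sketch.
    """
    counts = defaultdict(lambda: [0, 0, 0])  # [total, domain hits, version hits]
    for market, d_ok, v_ok in results:
        bucket = counts[market]
        bucket[0] += 1
        bucket[1] += int(d_ok)
        bucket[2] += int(v_ok)
    return {
        market: {"n": n, "domain_acc": d / n, "version_acc": v / n}
        for market, (n, d, v) in counts.items()
    }


# Toy rows for illustration only (not real results).
toy_results = [
    ("EU", True, True),
    ("EU", True, False),
    ("JP", False, False),
    ("JP", True, True),
]
summary = accuracy_by_market(toy_results)
```

Reporting per market rather than as one pooled number matters because a strong overall average can hide a jurisdiction where retrieval consistently fails.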

It's a small peek into everything that goes into making the Prodeen harness actually perform on real tasks.

Why This Matters for Regulatory Teams

If you work in Regulatory Affairs, Quality, or Compliance, you already know the stakes. When you need an official regulation, you need the real one — from the right government portal, in the current consolidated version, with the correct link.

General-purpose AI models are powerful tools. But regulatory source retrieval is not a general-purpose problem. It requires deep domain context: knowing the structure of each country's legislative system, understanding how regulations are amended and consolidated, and verifying that what you've found is genuinely authoritative.

The models will keep getting smarter. But intelligence without the right harness is still a coin flip.

Jakub